Author: Damian Player

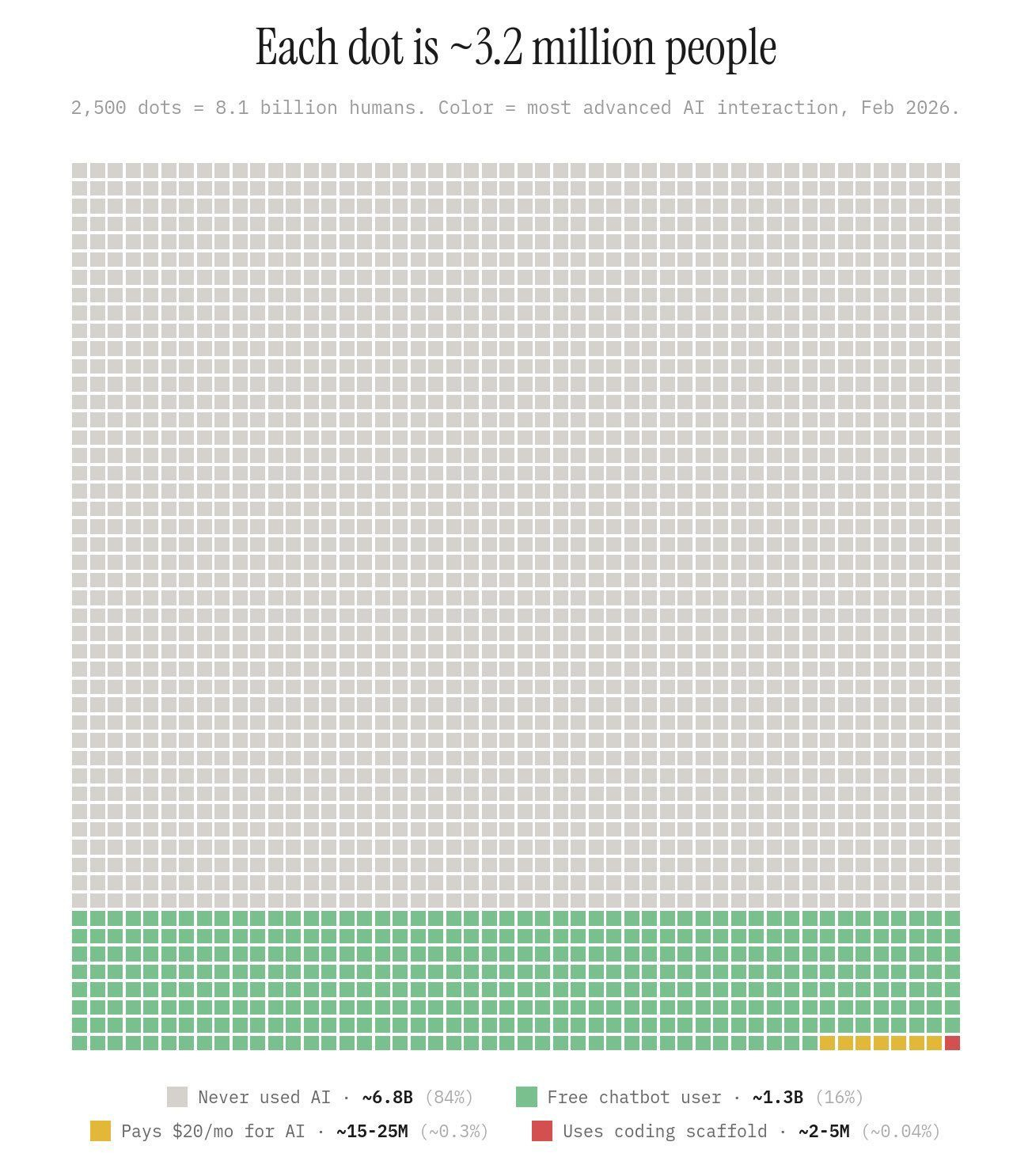

A single image has been circulating widely in AI and creative technology communities over the past month. It shows 2,500 dots, each representing roughly 3.2 million people, colour-coded by their level of AI engagement. The sea of grey is vast: 84% of humanity, around 6.8 billion people, who have never used a chatbot. A band of green at the bottom represents the 1.3 billion who use free tools. A thin yellow sliver marks the 15–25 million paying around $20 a month. And a tiny dot of red indicates the 2–5 million using AI to an advanced level on agentic platforms such as Cursor, Google Antigravity and Claude Code.

If you are reading this article about AI in AV and immersive experience design, you are almost certainly somewhere in that yellow sliver, or in the red dot entirely. By any global measure, you are an early adopter. Probably an innovator.

That context matters. Industry surveys capture the broad landscape of AI engagement across the sector honestly and usefully. What they cannot fully capture, because they are not designed to, is the specific, fast-moving conversation happening inside studios, virtual production (VP) facilities, and tight-knit creative communities working at the bleeding edge of technology and live experience.

In experiential AV systems/workflow design and immersive content creation, the pace is different. Projects run to brutally tight windows. Installations must perform flawlessly in front of hundreds, thousands or millions of people, potentially in venues with finite and constrained technical support. Content must be visually spectacular and brand-accurate. Budgets are always under sustained pressure. And the client is no longer a passive recipient of concepts: they are increasingly arriving with AI-generated ideas of their own, having iterated overnight on the same tools that we use professionally.

From Tool to Production Partner: Agentic AI in Immersive Workflows

For most of the past two years, practitioners across the creative and immersive industries have used AI as a sophisticated assistant: a tool that responds to prompts, accelerates research, generates first-draft content. That model is already obsolete at the more technically advanced end of the experiential community.

The shift is towards what engineers call ‘agentic’ AI: systems that do not wait for prompts but orchestrate complex, multi-step pipelines autonomously. The distinction matters. “AI suggests, human executes” is the old model. “Human defines creative intent, agent orchestrates the pipeline” is the new one.

Dr John O’Hare delivering his “VisionFlow Agent Collaboration” talk in Manchester

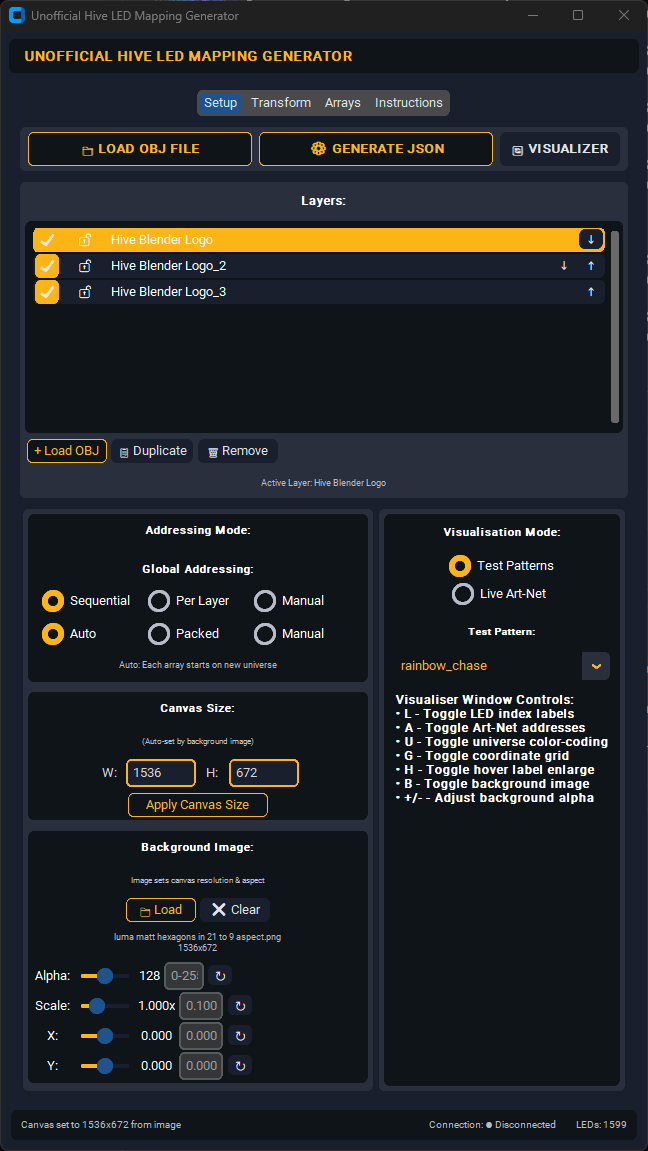

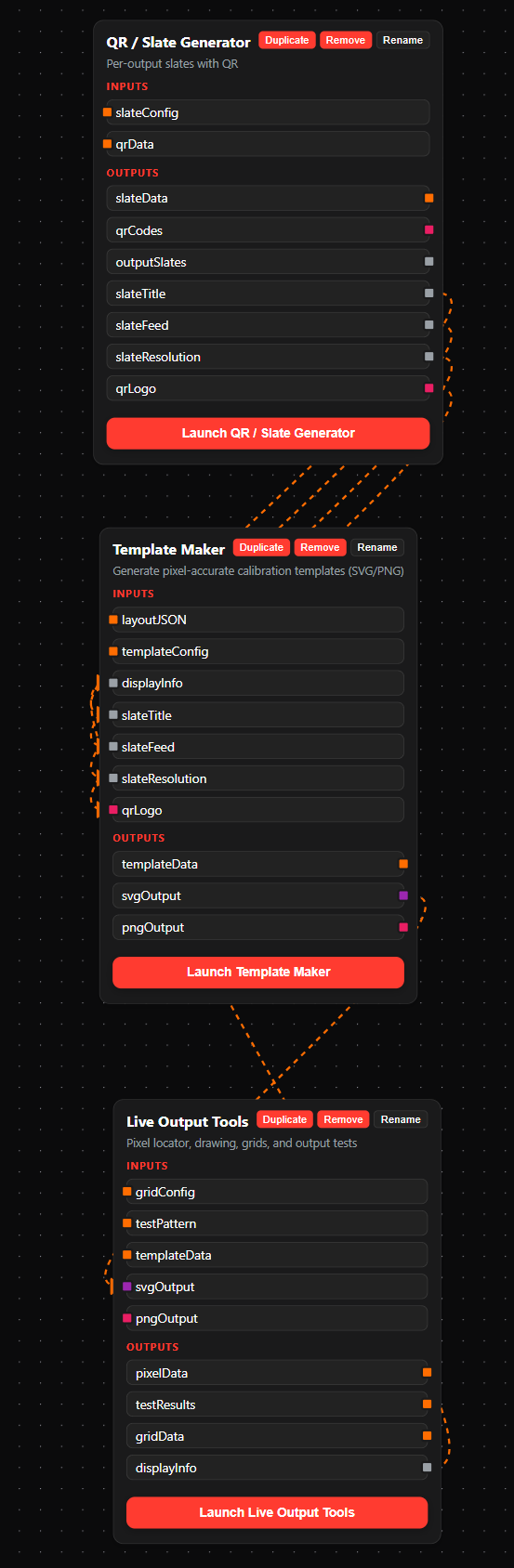

Dr John O’Hare is an AI researcher and agentic AI architect whose DreamLab AI operation in the Lake District, UK, functions as both a training facility and an active R&D lab. He has spent the past two years building what he affectionately refers to as “Jarvis”: an open-source, headless agentic system, properly called VisionFlow, capable of, amongst other things, acting as an orchestrator connecting ComfyUI, Blender, FLUX.2, and SAM3D into a single autonomous pipeline. A text prompt goes in; VisionFlow’s preferred internal flow runs through Trellis directly to GLB, the asset lands already set up inside Blender, and a full project, structured and documented, gets pushed to a GitHub repository. One test pipeline completed a full 3D elephant model in 31 seconds once the supporting models were cached. Critically, because everything is committed to version control, you get collaboration, provenance, and date stamps for free, baked into the workflow rather than bolted on afterwards. “When everyone realises it’s this easy,” he notes, “things are going to get well weird.”
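To make the “human defines intent, agent orchestrates the pipeline” idea concrete, here is a minimal Python sketch of a staged pipeline that carries a text prompt through to a documented project and keeps a run log as it goes. It is not VisionFlow’s code: the `Job` class, the stage functions, and the file names are all hypothetical stand-ins, and each stage is a stub that only records what a real tool would produce.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Job:
    """State passed from stage to stage: the brief, outputs so far, and a run log."""
    prompt: str
    artifacts: Dict[str, str] = field(default_factory=dict)
    log: List[str] = field(default_factory=list)


Stage = Callable[[Job], None]


# Hypothetical stage stubs: each records the file a real tool would produce.
def generate_concept_image(job: Job) -> None:
    job.artifacts["image"] = "concept.png"


def image_to_glb(job: Job) -> None:
    if "image" not in job.artifacts:
        raise RuntimeError("image_to_glb needs a concept image first")
    job.artifacts["mesh"] = "asset.glb"


def set_up_blender_scene(job: Job) -> None:
    if "mesh" not in job.artifacts:
        raise RuntimeError("set_up_blender_scene needs a mesh first")
    job.artifacts["scene"] = "scene.blend"


def write_project_docs(job: Job) -> None:
    job.artifacts["docs"] = "README.md"


PIPELINE: List[Stage] = [
    generate_concept_image,
    image_to_glb,
    set_up_blender_scene,
    write_project_docs,
]


def run_pipeline(prompt: str, stages: List[Stage]) -> Job:
    """Run every stage in order, logging each completed step for provenance."""
    job = Job(prompt=prompt)
    for stage in stages:
        stage(job)
        job.log.append(stage.__name__)
    return job


job = run_pipeline("a low-poly elephant", PIPELINE)
print(job.log)
print(job.artifacts)
```

In a real system each stub would call out to the relevant tool and the final step would commit the project to version control; the point of the sketch is only that the human supplies the prompt and the stage list, and the orchestrator does the sequencing and record-keeping.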

For the experiential sector, the implication is immediate. An agentic pipeline can prototype an entire scene for a branded installation overnight, generate variants on a visual direction, or build preliminary 3D assets for client pre-vis: work that previously occupied days of designer time, or was simply cut for budget.

The shift to AI-powered coding environments (Cursor, Google Antigravity, Claude Code) that function as active development partners has already transformed software creation pipelines for rapid development projects. Projects previously declined for budget or timeline risk reasons become deliverable. The compounding effect on what a small, high-skilled team can produce is significant.

The key distinction the community is drawing is between “vibe coding” (throwing prompts at an AI and hoping something useful emerges) and what practitioners are calling “agentic engineering”: deliberate system design, careful architectural planning, and structured creative direction, with AI handling the complex production steps. The former often leads to inconsistent, hard-to-maintain results, while the latter is redefining what a small studio or a single individual can realistically achieve and consistently replicate.

In my own practice, I look for applications to support my technical and creative tasks, as I always have. In recent years, however, I have also started instinctively employing agentic workflows at the same time, to see whether such tools can be generated or adapted specifically to fit my needs on each project. More often than not, I now end up making these tools myself, tailored to each task and built by voice while I am doing other work.

The emerging professional profile combines deep domain expertise as a director, AV technician, 3D artist, or experience designer with the ability to orchestrate agentic AI tooling. Not a developer who has learned to make experiences, but an experience maker who has learned to orchestrate AI. That combination is, right now, genuinely rare and genuinely valuable.

Virtual Production Meets Brand Experience: The Commercial Frontier

Virtual Production (VP), the use of large-scale LED volumes as dynamic, photorealistic in-camera backgrounds, has been a film industry workflow for several years. It is now becoming a brand campaign story.

AI is the driver. VP LED volumes that previously required expensive, hand-built Unreal Engine environments, with weeks of 3D art direction and technical authoring, can now be fed by AI-generated content pipelines. The cost and lead time for bespoke visual environments have dropped significantly.

THG Studios’ World’s First AI-Driven Immersive, Shoppable Catwalk

In February 2026, THG Studios, working with members of the DreamLab AI team, set a WRCA (World Record Certification Agency) record for the World’s First AI-Driven Immersive, Shoppable Catwalk. AI-generated environments were served in near-real-time to a large VP LED volume while live models walked in front of them, with the content integrated into a live commerce layer allowing the audience to purchase what they were seeing. The full project infrastructure, true to DreamLab’s methodology, was documented and committed to a public GitHub repository. What made it technically significant was not any single component but the integration of a generative AI pipeline, VP hardware, and real-time commerce into a unified live brand experience, with every build decision traceable and reproducible from the first commit.

Real-Time Adaptive Experiences: AI That Responds to the Room

Most AI-generated experiential content is still pre-produced: rendered, approved, delivered to a media server, played back on a schedule. The next frontier, already being funded, prototyped, and in some cases deployed, is content that changes in response to who is in the room.

Real-time adaptive experience design uses sensor inputs (audio, visual, biometric, behavioural, environmental) to feed AI systems that modify the experience continuously: adjusting lighting, shifting the narrative arc of an installation, responding to crowd density or individual visitor behaviour.

The critical challenge for permanent or touring installations is this: consumer AI is designed to be novel, unpredictable, generative. Permanent installations require the opposite: deterministic behaviour, guaranteed uptime, robust fallback protocols, and locked creative parameters signed off by the client. An installation centrepiece that behaves unexpectedly on opening day is not a failed experiment. It is a reputational and potentially contractual risk.
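One way to picture “locked creative parameters” and a “fallback protocol” together is a thin guard between the generative system and the show controller. The Python sketch below is illustrative only: the `Look` fields, the `LIMITS` ranges, the `FALLBACK` scene, and the `safe_look` function are all assumptions made for the example, and it deliberately leaves out timeouts and hardware monitoring, which a real installation would also need.

```python
import math
from dataclasses import dataclass
from typing import Callable, Mapping


@dataclass(frozen=True)
class Look:
    brightness: float  # 0.0 (off) to 1.0 (full)
    hue_shift: float   # degrees away from the brand palette


# Hypothetical ranges signed off by the client; the generator may not leave them.
LIMITS = {"brightness": (0.2, 0.8), "hue_shift": (-15.0, 15.0)}

# Hypothetical pre-approved look used whenever the generator misbehaves.
FALLBACK = Look(brightness=0.5, hue_shift=0.0)


def clamp(value: float, low: float, high: float) -> float:
    """Pull a finite value back inside [low, high]; reject NaN and infinity."""
    if not math.isfinite(value):
        raise ValueError("non-finite parameter")
    return max(low, min(high, value))


def safe_look(generate: Callable[[float], Mapping[str, float]],
              crowd_density: float) -> Look:
    """Ask the generative system for a look, then enforce limits or fall back."""
    try:
        raw = generate(crowd_density)
        return Look(
            brightness=clamp(float(raw["brightness"]), *LIMITS["brightness"]),
            hue_shift=clamp(float(raw["hue_shift"]), *LIMITS["hue_shift"]),
        )
    except Exception:
        # Any failure (crash, missing field, nonsense value) yields the approved look.
        return FALLBACK


def overexcited_model(density: float) -> Mapping[str, float]:
    # Stand-in for a generative model that overshoots the approved ranges.
    return {"brightness": 5.0, "hue_shift": -90.0}


print(safe_look(overexcited_model, crowd_density=0.7))
```

The design choice is that the generative system only ever proposes; whatever reaches the media pipeline has either passed the agreed limits or is the approved fallback.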

Any studio moving into real-time adaptive design must treat AI reliability engineering, not just creative AI, as a core competency. The hardware ecosystem also needs to catch up: real-time generative content requires edge computing with serious GPU capability, not traditional media servers. This is not a future requirement. It is a current one.

Who Adapts and Who Doesn’t: The Skills and Economics Reality Check

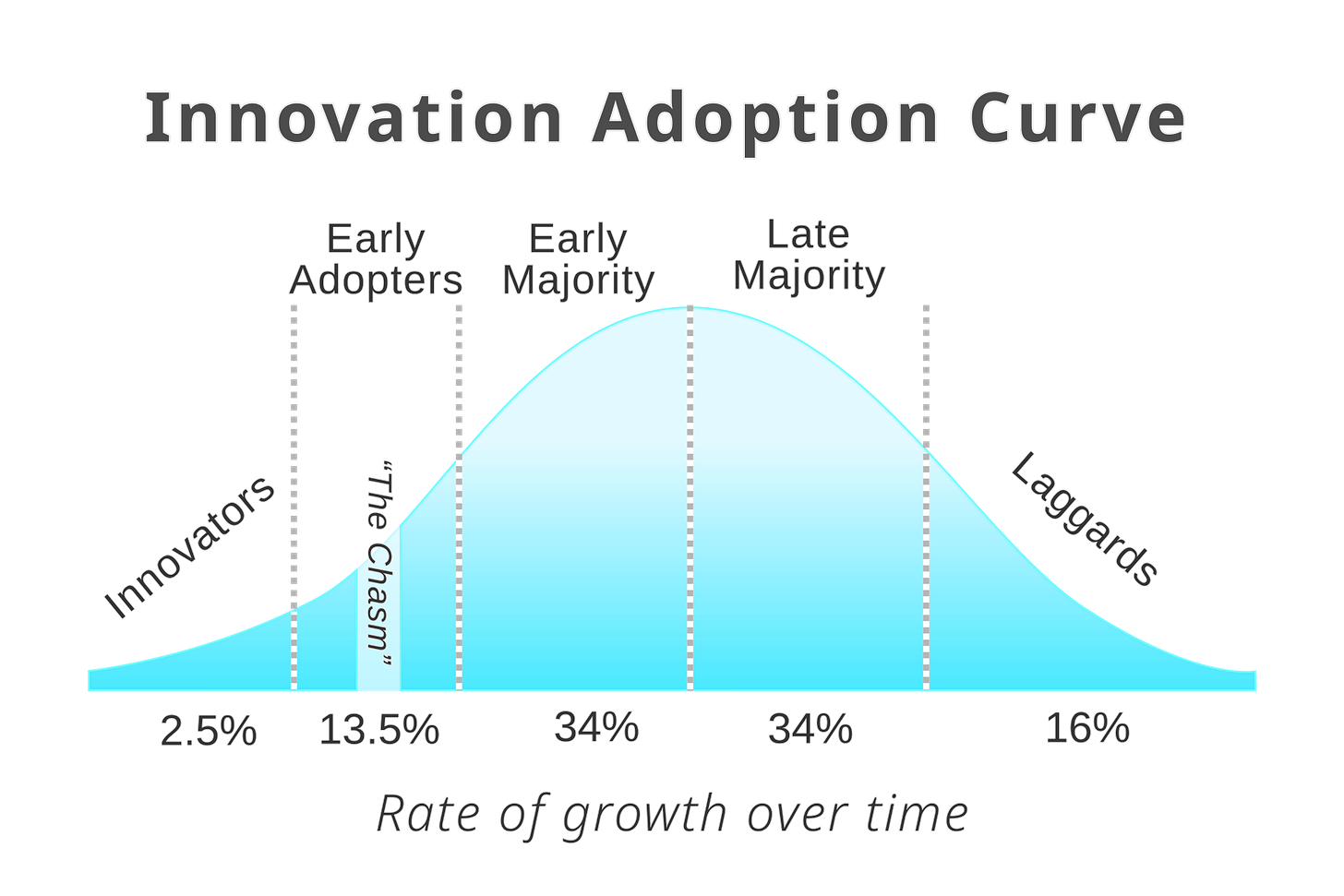

The most honest conversation happening in the experiential AI community is not about technology. It is about people, and it maps almost precisely onto the classic technology adoption curve.

The framework, first articulated by Everett Rogers and widely used since, divides any new technology’s user base into five groups: Innovators (2.5%), Early Adopters (13.5%), Early Majority (34%), Late Majority (34%), and Laggards (16%). Each group has a fundamentally different relationship with novelty, risk, and proof. Innovators test ideas before benefits are clear. Early Adopters are visionaries who influence others and tolerate risk. The Early Majority are pragmatists who wait for proven results. The Late Majority adopt only when most have. Laggards resist until adoption is unavoidable.

The dot chart referenced at the opening of this piece makes plain where that curve sits globally in early 2026. The 2–5 million people using agentic code platforms, the red dot, are the world’s Innovators for this technology cycle. The 15–25 million paying subscribers to conversational AI platforms such as Gemini, Claude and ChatGPT are its Early Adopters. The 1.3 billion free users of those conversational platforms represent the leading edge of the Early Majority. And 84% of humanity, 6.8 billion people, have not yet engaged at all.

The “AI revolution” being discussed intensely in the trade press, on social channels and at industry events is a conversation between the Innovators and Early Adopters. It is, in the author Damian Player’s framing, an “AI bubble”, an echo chamber: a community of perhaps 2–5 million people whose experience is entirely unrepresentative of the broader market or the broader workforce. When someone in that bubble says “AI is everywhere,” they mean: AI is everywhere in ‘my’ world.
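For readers who want to check the arithmetic behind that mapping, the short Python sketch below combines the chart’s rounded figures with Rogers’ category shares. The function names `category_at` and `percent_of_people` are hypothetical helpers written for this illustration, and the inputs are only the approximate numbers quoted in this article.

```python
# Figures quoted in the text: 2,500 dots, each standing for roughly 3.2 million people.
PEOPLE_REPRESENTED = 2_500 * 3_200_000

# Rogers' categories in adoption order, with each group's share in percent.
ROGERS = [
    ("Innovators", 2.5),
    ("Early Adopters", 13.5),
    ("Early Majority", 34.0),
    ("Late Majority", 34.0),
    ("Laggards", 16.0),
]


def category_at(cumulative_percent: float) -> str:
    """Return the category the next adopter joins once this share has adopted."""
    upper = 0.0
    for name, share in ROGERS:
        upper += share
        if cumulative_percent < upper:
            return name
    return ROGERS[-1][0]


def percent_of_people(count: int) -> float:
    """Express a head count as a percentage of the people the chart represents."""
    return count * 100 / PEOPLE_REPRESENTED


engaged_percent = 100 - 84  # the chart's 84% have never used a chatbot

print(PEOPLE_REPRESENTED)
print(category_at(engaged_percent))
print(percent_of_people(2_000_000), percent_of_people(5_000_000))
```

On these rounded figures, about 16% of people have used a chatbot at all, which is the point on the textbook curve where the Early Majority begins, while the 2–5 million agentic users amount to a small fraction of one percent.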

Step outside that bubble and most businesses still operate on spreadsheets. Most professionals have never written a prompt. Most of the world’s work is being done the way it was done five years ago.

For the experiential sector, this has two direct implications.

The first is that the early-adopter advantage is real and still substantial. The compounding of capability that comes from genuine, daily engagement with agentic tooling (building, breaking, learning, rebuilding) is not widely distributed. Those investing seriously now are not just keeping current. They are creating a lead that narrows only for those who start soon. The gap between an active Innovator and a well-intentioned observer is widening by the week.

The second is that innovation is contagious. The adoption curve framework has a crucial mechanism: Innovators act as what might be called “super-spreaders.” When one person inside a studio or team genuinely leverages a new tool or workflow and champions its output within the studio, they lower the conceptual barrier for everyone around them. They infect colleagues with evidence of what is possible. The more Innovators a team contains, the faster the transition from novelty to practice to standard. Studios building serious AI capability are not just developing a technical advantage for themselves; they are reshaping the expectations and culture of everyone they work alongside, and of their sector.

The learning curve is real and the tool landscape is wide. Weavy allows a graphic designer to move from sketch to image to video mock-up without After Effects expertise, using a simplified node-based system to build repeatable workflows. ComfyUI, at the more technical end, allows those willing to invest in learning its node-based workflow to build custom pipelines for specific production tasks. Full open-source agentic systems like Jarvis (AKA VisionFlow) require real engineering expertise but are increasingly accessible through pre-built Docker deployments and community-maintained tooling. There is an on-ramp at every level. The barrier is time and the perceived value of investing it, not technical aptitude.

AI is lowering the barrier for clients and competitors to produce content independently while also raising expectations for creative sophistication across the market.

Mid-tier creative studios that once relied on technical complexity as their competitive moat now find themselves the most exposed. The studios doing well are those that understand what they are actually selling: the craft, judgement, and directed creative vision that converts AI outputs into experiences that move people. That capability cannot be automated. It can only be sharpened, and it is sharpened by engaging seriously with the tools rather than keeping them at arm’s length.

A Note on Open Source and Platform Risk

Studios building AI-assisted workflows should note one further risk: platform dependency.

Practitioners across the creative technology community are all too familiar with having to rebuild digital experiences from scratch after the platforms they had built deliverables on (8th Wall, Ready Player Me and others) were acquired, changed terms, altered pricing, or discontinued features. The same risk exists in the AI landscape. Building production workflows on commercial API-dependent platforms means building on shifting ground.

The community is increasingly developing a preference for open-source foundations: models and tools whose behaviour is fixed, whose terms cannot change overnight, and whose operation is not contingent on a third party’s commercial decisions. This is a more technically demanding path. It is also the more resilient one for studios building long-term production capability.
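Whichever foundation a studio chooses, one common way to limit the cost of a platform change is to keep production code dependent on a small interface rather than on any one provider. The Python sketch below illustrates that idea under stated assumptions: `ImageBackend`, `LocalOpenModel`, `HostedService`, and `render_backdrop` are hypothetical names, and both backends are stubs that return placeholder bytes instead of calling a real model or API.

```python
from typing import Protocol


class ImageBackend(Protocol):
    """The only contract the production workflow is allowed to rely on."""

    def generate(self, prompt: str) -> bytes:
        ...


class LocalOpenModel:
    """Stub for a self-hosted open-source model running on the studio's hardware."""

    def generate(self, prompt: str) -> bytes:
        return f"local:{prompt}".encode()


class HostedService:
    """Stub for a commercial hosted API whose terms or pricing may change."""

    def generate(self, prompt: str) -> bytes:
        return f"hosted:{prompt}".encode()


def render_backdrop(backend: ImageBackend, brief: str) -> bytes:
    """Workflow code: builds the prompt and never names a specific provider."""
    return backend.generate(f"LED volume backdrop, {brief}")


# Swapping providers is a change at the call site, not inside the workflow.
print(render_backdrop(LocalOpenModel(), "misty forest at dawn"))
print(render_backdrop(HostedService(), "misty forest at dawn"))
```

This does not remove the risk described above, but it confines a forced migration to one adapter class instead of every deliverable built on top of it.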

Commercial and creative advantage through sustained AI levelling up in the experiential and immersive sectors is not a future concern. It is a current reality, advancing faster than most traditional commentary platforms acknowledge. The studios that will thrive are those that engage with specific, genuine depth and commitments of R&D resources, not those that adopt the vocabulary without the practice.

Best,

Simon Graham

https://www.simongraham.tech/